Our past interrogation of the Voynich Manuscript has deconstructed its esoteric symbols into a form more suitable for our ends, subjected its statistical properties to comparison with more mundane texts, and unearthed its hidden internal structures via the esoteric process of topic modelling. In this final post, we will build on the structures revealed in earlier posts to ask how, if at all, the Voynich Manuscript’s textual properties shift within the text itself. Are there significant discontinuities in the writing, indicating a separation of the manuscript into meaningful sections? Or is the text merely a homogenous mass more suggestive of a rote, mechanical, generative procedure?

To address this question we will delve once more into the arcana of machine learning, and draw out the technique of changepoint analysis. This procedure aims to identify one or more points in a series of observations at which the underlying process that generates the data has somehow altered.

Once more, we will operate within the warm embrace of Bayesian statistics and exploit the Stan modelling language as our means to cast light into the darkness1.

On Shifting Sands

Changepoint analysis is an active field of endeavour, with deep subtleties in its application. For the purposes of this analysis we will focus on the comparatively simple problem of identifying a single changepoint amongst the teeming mass of the Voynich Manuscript’s strangely compelling glyphs.

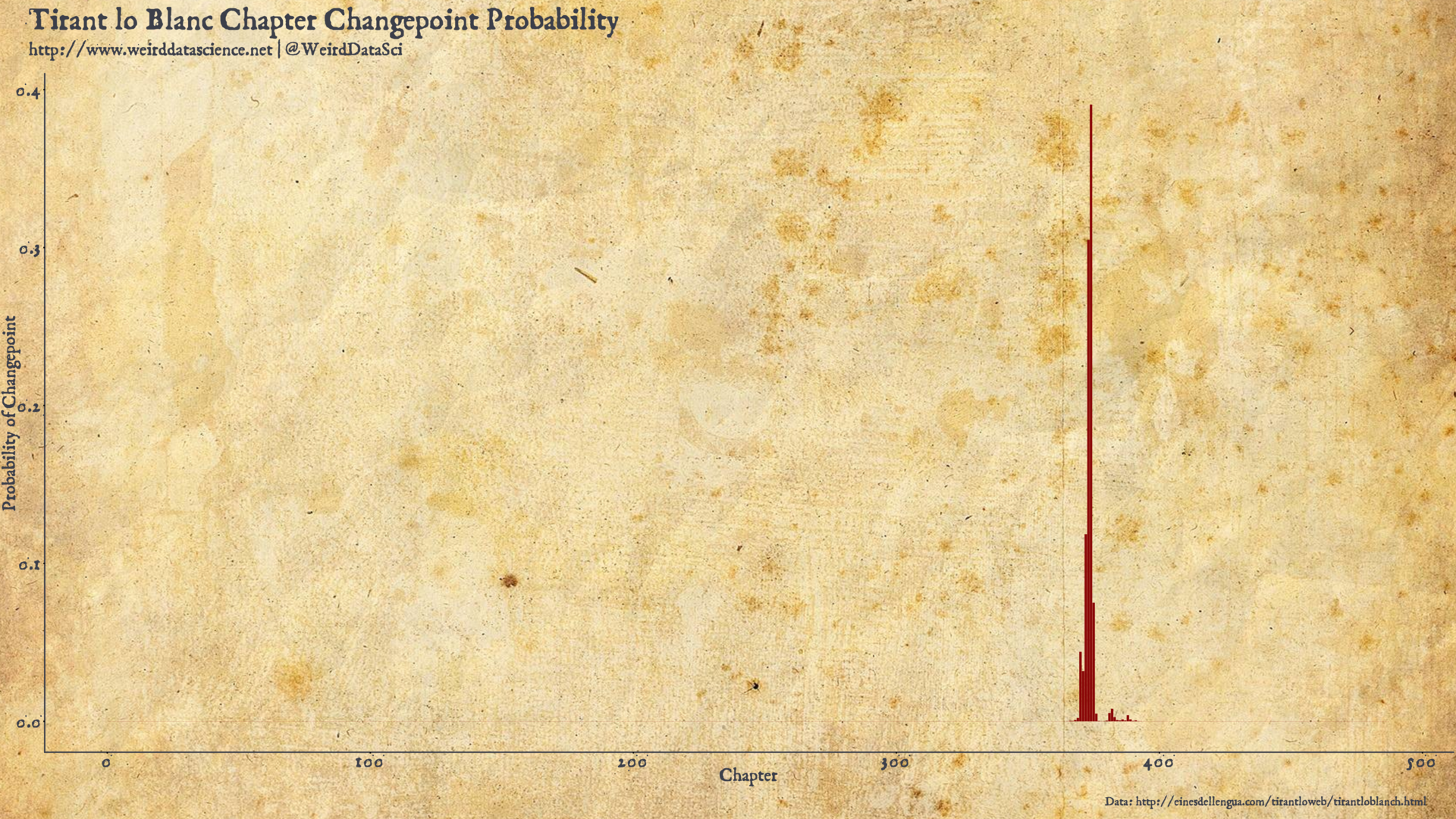

Statistical analysis of changepoints has been applied to a number of historical texts. To provide a first, tantalising glimpse into a world characterised by authorship analysis and the dark arts of adversarial stylometry, we begin by reproducing the work of Riba and Ginebra in ascertaining the existence of a shift in authorship in the 15th century Catalan chivalric romance Tirant lo Blanc.

Briefly, Tirant lo Blanc was written by Joanot Martorel, a Valencian knight, whose untimely death left the manuscript unfinished. The work was completed and published by Martí Joan de Galba. The specific nature of his contributions have, however, been the subject of some debate: did he substantially compose parts of the text, or simply arrange and edit the work?

Riba and Ginebra proposed, in 2005, to identify any stylistic change in the work through a Bayesian analysis of the frequency of word lengths in the document. Their approach, built on a tradition of such analyses in the stylometry literature, relies on the fact that differing authors, even when attempting to mimic the style of another, unconsciously make different word choices. Most importantly, the relative frequency of shorter context free words is likely to differ between authors.

From a certain analytical perspective, each word in a sequence of text can be represented merely by its length, as in the analyses of our earlier posts. Taking this view to a probabilistic extreme, a text, therefore, can be considered as a sequence of draws from a categorical distribution of word lengths. We may consider the length of each word as resulting from rolls of some abstract, biased die with a number of sides equal to the number of possible word lengths in the prose. The full text, therefore, is itself a multinomial distribution in which the number of categories matches the possible lengths of words observed in the document, and the number of trials is the number of words in the text. The stylistic differences between authors, therefore, is known to be revealed most strongly in the relative frequency of the shorter words.

Having descended thus far, and with dark suspicions of an authorship change disrupting the contiguity of Martorel’s opus, we hypothesise that the entire volume may best be described by not one, but by two multinomial distributions over short word lengths: one for the earlier text of the original author, with a second describing the later contributions of his posthumous collaborator. The point of division between these distributions is the changepoint.

Winds of Change

To drag this concept from its abstract formulation to a tangible realisation, we turn to the Stan probabilistic programming language, The models here draw heavily on the Stan user’s guide changepoint section, which lays out the concepts underlying these approaches with horrifying clarity.

Perhaps the most unusual aspect of implementing this model is due to Stan’s inability to sample discrete parameters; in this case the location of the changepoint. As such the model must conceal a latent discrete parameter cunningly hidden in its construction. This may then be marginalised out to reveal the probability of each value of the latent parameter. The model we construct here, therefore, will provide us not simply with a point estimate of the most likely changepoint, but a set of probabilities for each possible changepoint.

As cryptically hinted above, our model of the Voynich text abstracts its prose to counts of word lengths found on each folio of the manuscript, resulting in a multinomial distribution of word lengths. For the changepoint, we hypothesise that there are not one, but two multinomials with different parameters falling on either side of some changepoint. Our goal is to identify the pivotal folio at which this underlying shift most likely occurs.

Representing this more formally, in the style used for generative Bayesian models, we can write the distribution of word lengths, \(\Omega\), in terms of its hyperparameters as:

$$\begin{eqnarray}\

\Omega &\sim& \mathbf{Multinomial}( t < c~?~\theta_e : \theta_l )\\

\theta_e &\sim& \mathbf{Dirichlet}(\alpha)\\

\theta_l &\sim& \mathbf{Dirichlet}(\alpha)\\

c &\sim& \mathbf{Uniform}(0, 1)

\end{eqnarray}$$

The most crucial element for isolating the changepoint is the conditional operator in the first line. We treat the frequency of words of different lengths on a given folio as one point in a sequence, indexed by the value \(t\). The conditional statement encodes that observed word lengths prior to some unknown point \(t = c\) are drawn from a multinomial with one vector of parameters, \(\theta_e\); from that folio onwards, word lengths are drawn according to a second multinomial with parameter vector \(\theta_l\). When appropriately constructed, fitting the model produces a posterior distribution across the various possible \(\theta_e\) and \(\theta_l\) parameters for all possible changepoints. The folio at which the posterior probability of \(c\) is highest is our best estimate of the changepoint, and is accompanied by estimates of \(\theta_e\) and \(\theta_l\) around that changepoint.

As mentioned above, we marginalise the discrete changepoint parameter, \(c\), rather than sampling it directly. A full example of the concept, applied to a Poisson distribution, is given by the Stan Users’ Guide changepoint section. This key step moves the parameter \(c\) from the full joint probability function of the model, resulting in a likelihood of word lengths according to the parameters \(\theta_e\) and \(\theta_l\), which can be calculated in Stan by summing over this likelihood for all possible values of \(c\)2.

Reproducing, with only slight adaptations, the original Poisson example, our full joint probability would be:

$$p(\theta_e, \theta_l, c, \Omega) =

p(\theta_e)p(\theta_l)p(c)p(\Omega|\theta_e,\theta_l,c)$$

Marginalising out \(c\), this can be represented as:

$$\begin{eqnarray}

p(\Omega|\theta_e, \theta_l) &=& \sum_{c=1}^Tp(c,\Omega|c,\theta_e,\theta_l)\\

&=& \sum_{c=1}^Tp(c)p(\Omega|c,\theta_e,\theta_l)

\end{eqnarray}$$

The result is that our Stan model can be constructed by sampling across values of \(\theta_e\) and \(\theta_l\) for all possible values of \(c\). Due to the requirement to sum across all possible values of the discrete paramete, however, this subterfuge of marginalisation is restricted in general to bounded discrete parameters.

We can distill the above into a Stan model as given below.

To launch the model in gentler waters, we apply it to Martorel and de Galba’s Tirant lo Blanc to discover where, if at all, de Galba’s major contributions to the text begin, and see the extent to which our Stan rendering reproduces the results of Riba and Ginebra’s analysis. As in their work we will focus only on those words with length shorter than 5 letters, where the key stylistic difference between authors makes itself apparent.

We place a relative uninformative Dirichlet prior on the multinomial \(\theta_e\) and \(\theta_l\) parameters, with the vector of \(\alpha\) values set to one. This results in a uniform distribution across all possible simplexes. Note that this does not push the multinomial towards a uniform simplex, but instead that all possible simplexes are equally likely. \(\alpha \ge 1\) produces increasingly uniform simplexes; \(0 \le \alpha \le 1\) produces simplexes in which the probability mass is more likely to be concentrated in some given element.

With the resulting data and model in unison, we can see the results of this analytic process.

Our multinomial automaton suggests a location for a significant stylistic changepoint in Tirant lo Blanc with the main concentration of probability mass around chapter 374. Perhaps unsurprisingly, but pleasingly, this is in close agreement with the earlier analysis of Riba and Ginebra, who placed their estimates between chapters 371 and 382.

Nos et mutamur in illis

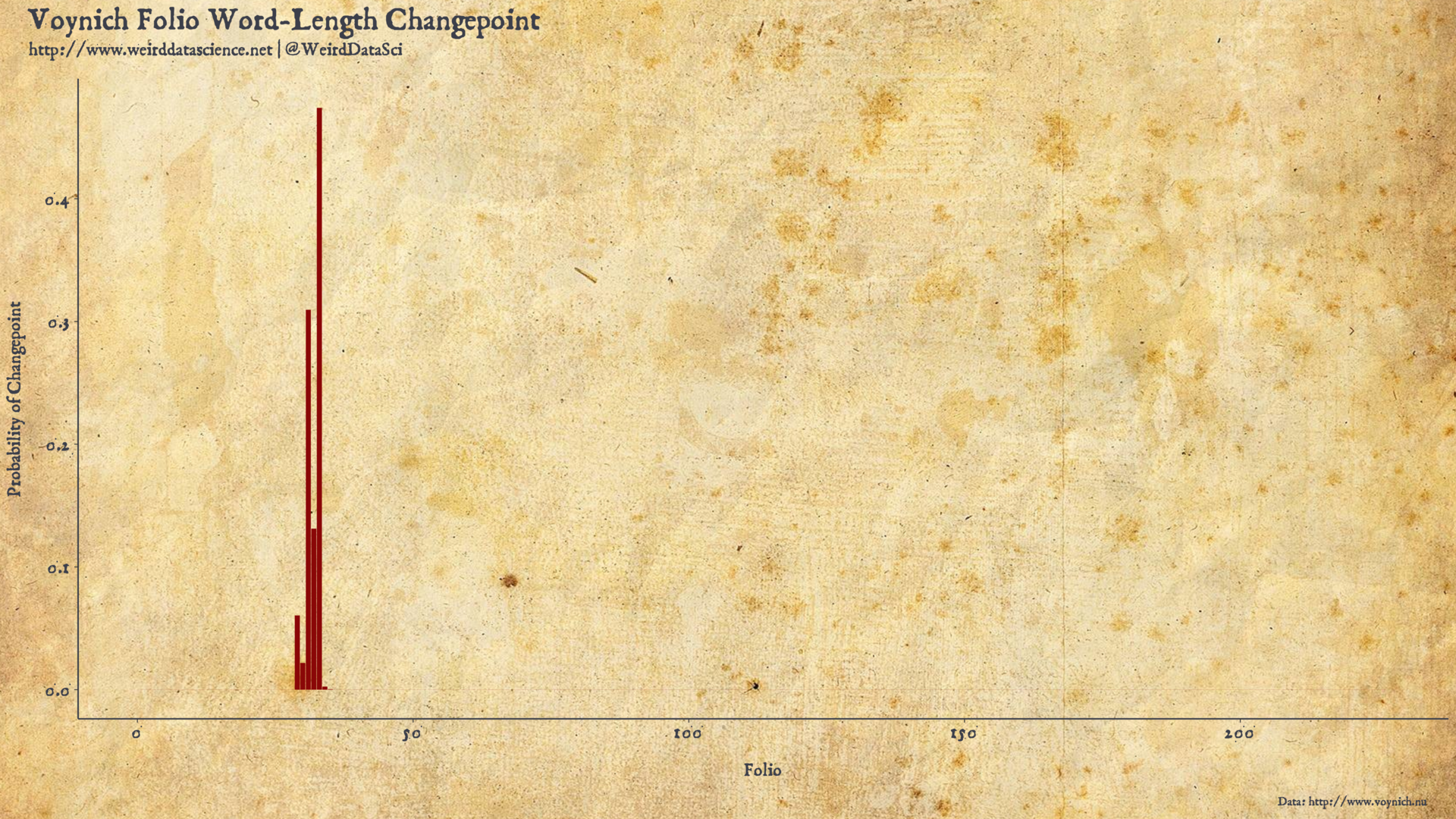

The changepoint model realises the dark imaginings of our predecessors. What horrors might it reveal when applied to the Voynich Manuscript? Following the logic of the above analysis, we will focus initially on the shorter words in the Voynich corpus.

To improve model fit we will place a little more information in the prior, setting the \(\alpha\) values for the Dirichlet prior to 0.6 to push the multinomial towards more concentrated probabilities. This reflects the gamma distribution of word length frequencies discussed in our earlier analysis. We also combine all single- and two-letter Voynich terms into a single category due to the small number of words falling into these categories3.

The model can now reveal where, if at all, a likely fracture resides in the textual assemblage of the Voynich Manuscript.

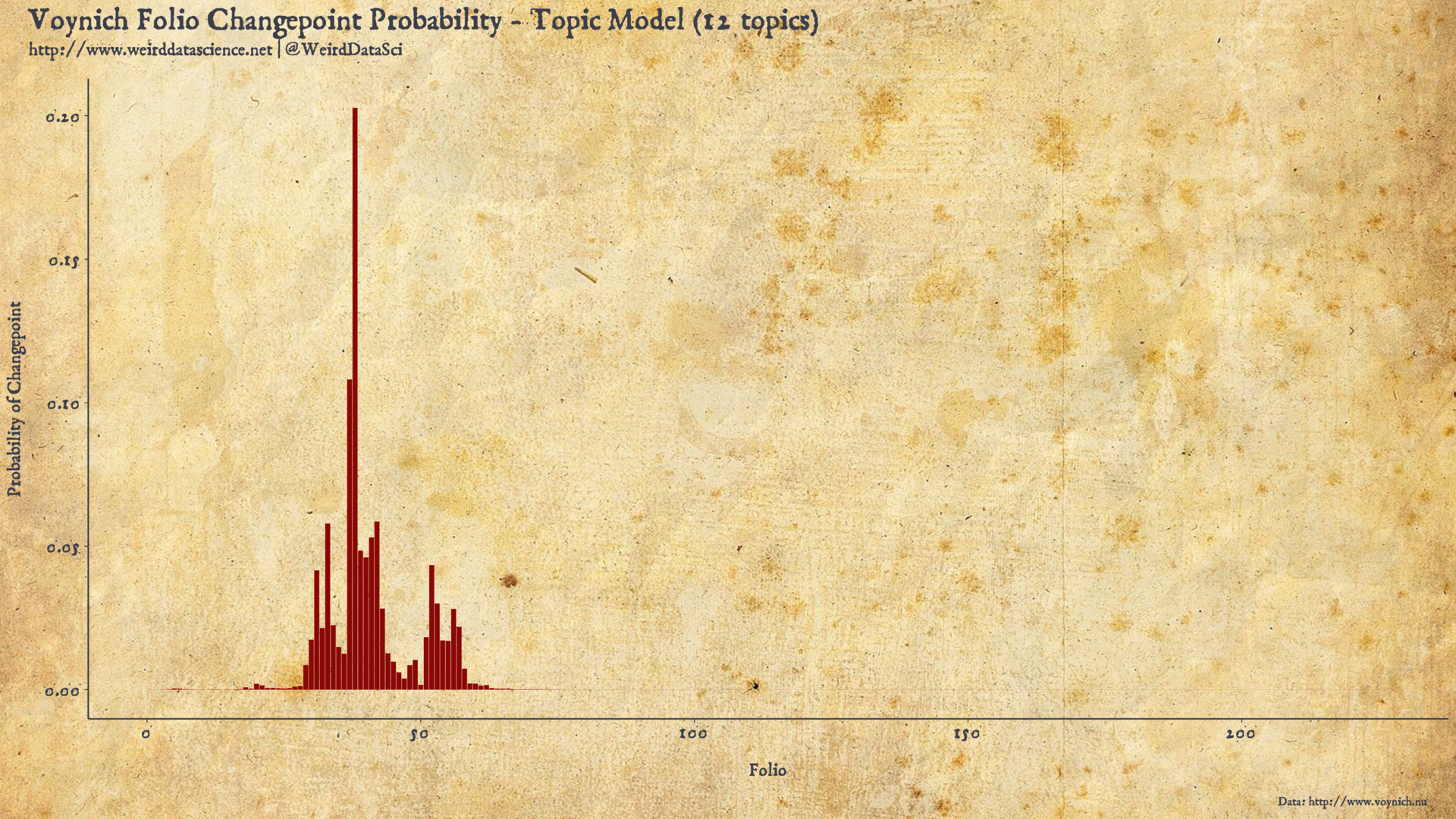

Our model appears to identify a potential changepoint in the frequency of short words in the Voynich Manuscript somewhere around Folio 33.

In contrast to the analysis of Tirant lo Blanc, we have no a priori suspicion that multiple authors were involved in the creation of the Voynich Manuscript. As such, we may hypothesise this changepoint as reflecting a simple stylistic shift, a shift in content, or, as with the previous analysis, a shift in authorship.

Mutatis Mutandis

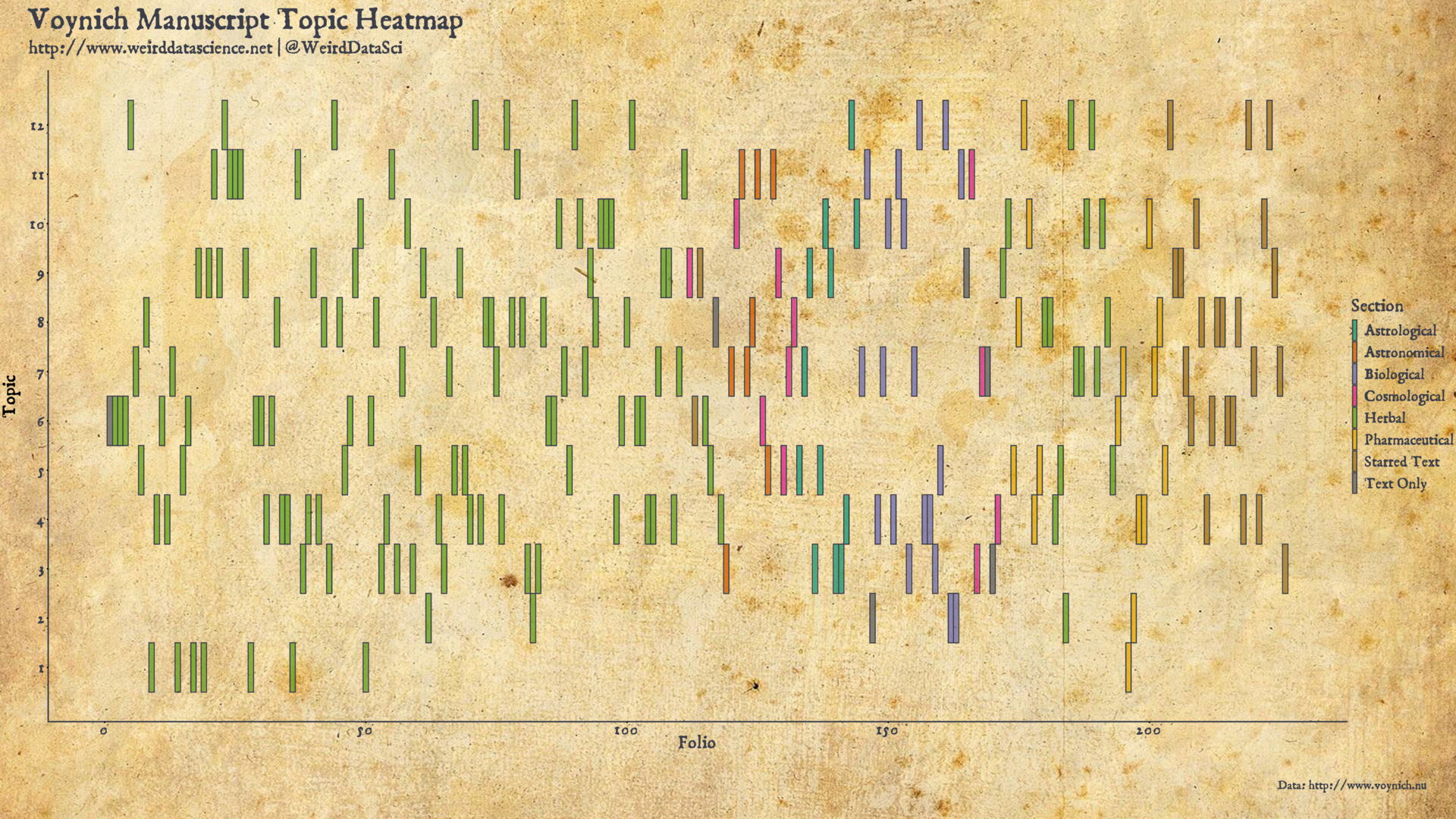

Recalling the topic model from the previous post, and the manually-assigned topics hinted at by the diagrams in the manuscript, Folio 33 might not immediately arouse our suspicions as the most obvious candidate for such a shift in style, falling as it does some way through the manually-identified herbal section, and without an immediately apparent shift in the distribution of topics.

For simplicity of presentation and analysis we will work with the alternative 12-topic model suggested by the metrics in the previous post rather than the 34-topic model initially given there4. The distribution of topics in this model can be presented as those of the 34-topic model were in our previous post.

We might, then, ask whether the distribution of topics from this model itself has a changepoint. Having interrogated the distribution of word length frequencies, we can pose the same questions to the distribution of assignments produced by the topic model. Similarly to above: were we to conceive of the topic model assignment for each page as being the result of the roll of some biased die, is there a notable point in the document where the bias of that die seems to shift?

Framed as such, there is little more required to apply this model to the topic assignments. Our Stan model, dredged from its slumber, merely needs to be provided with the topic model folio assignment data.

The location suggested for the topic model changepoint are surprisingly close to the results of the changepoint for the frequency counts of short words in the Voynich Manuscript. Whilst the changepoint probabilities are somewhat more diffuse in the topic model analysis, the most significant probability mass is centered around Folio 38, with much lower spikes extending out as far as Folio 55 and Folio 30.

In addition to the mutual support that these two analyses provide, it is notable that the major changpoint identified by both falls directly in the earlier portion of the first, major “herbal” section identified manually by Voynich scholars through inspection of the images accompanying the text. This suggests that that first section, at least in terms of textual content, is not as homogeneous as has previously been suggested. Future scholars investigating the structure of the Voynich Manuscript may therefore wish to direct more attention towards the earlier middle of the herbal section, around folios 30 to 40, to identify what dreadful changes may emerge at that point in the text.

We might naturally, but do not here, extend this analysis by shattering further the smooth unity of the Manuscript according to multiple changepoints. Such an extension is, as intimated in the guide, conceptually simple but computationally burdensome, as it requires recalculation of multiple potential distributions across an ever increasing number of parameters. As such, we leave this analysis, and the means to conduct it more efficiently, for the dim future.

Omnia mutantur, nihil interit

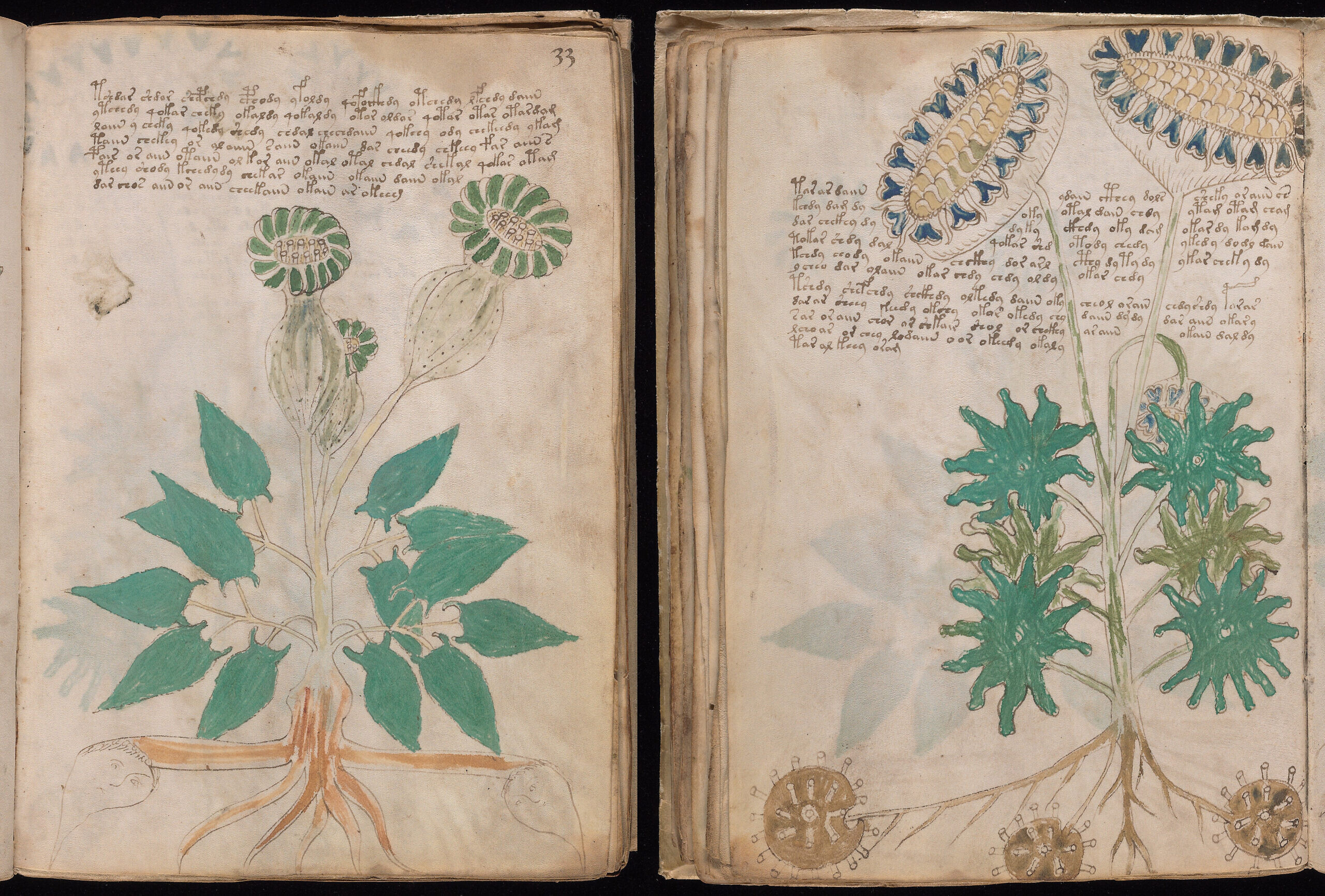

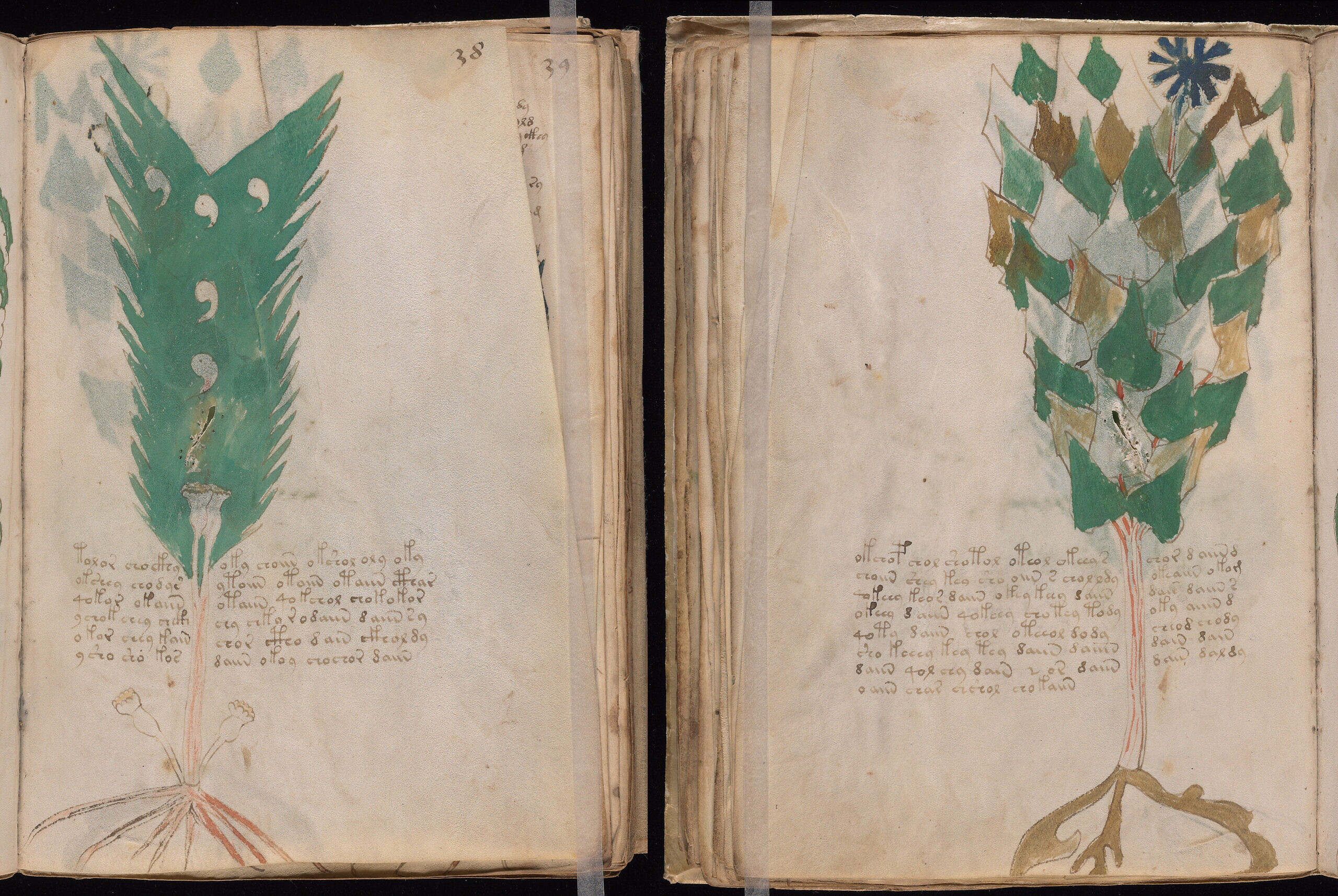

Our analysis has provided us with an abstracted location in the text at which our unsettling suspicions of change lie. The two folios arousing greatest curiosity are therefore Folio 33 and Folio 38, which we present here to reify our horror. It is worth highlighting, however, that the changepoint analysis says nothing specific about these two folios; the model identifies that the inexplicable scrawling prior to the changepoint differs significantly from the maddeningly incomprehensible glyphs following it, nothing more.

The statistical properties that we have uncovered in the Voynich Manuscript over the past four posts reveal something of its inner structure. It supports, but cannot prove, that the Manuscript is not a hoax, and that it the text is most likely drawn from some natural language.

The changepoint analyses in this post are a powerful tool for identifying evolution and mutation in data, and the demonstrated example of stylometric analysis to Martorel’s Tirant lo Blanc support their use in revealing points of fracture in texts, without reference to the source language.

With specific relevant to the Voynich Manuscript, both the word frequency changepoint and the topic model changepoint suggest that the manscript’s contents shift significantly at some point around Folios 30 to 40. Given the previous assignment of topics based on manual identification of images accompanying the text, this presents a new avenue of investigation for Voynich researchers.

We have resisted that tantalising draw of attempts to translate the Voynich Manuscript. The tools we have applied are more broadly statistical and aim at unveiling structures and revealing patterns in the text; whilst they may provide information towards deciphering the text, that particular conundrum is for the future.

There are, as we would always wish, many avenues left unexplored in this particular labyrinth. The topic model is crude, and more subtle disassemblies could well provide a more refined view. The word frequency patterns support natural language, but we have not made any effort to correlate them with known languages. We have treated words as a unit of analysis, but have not looked in detail at the structure of likely prefixes and suffixes; similar words with differing endings are particularly notable in the topic model, and analysis of these could reveal much more than we have dared to attempt.

There could be much to learn from assessing multiple changepoints in the Manuscript. The presentation of the volume certainly supports its composition of multiple disparate sections; perhaps identifying an inexorable sequence of stylistic shifts could unveil still more of this structure.

For now, however, our meandering journey into the dim twilight of the Voynich Manuscript has drawn to a close, leaving us still searching for illumination in the shadows of this most elegant enigma.

Continue to search, in fear of what you may find.

Code and data for this post: https://github.com/weirddatascience/weirddatascience/tree/master/20200220-voynich04-tempora_mutantur.

Footnotes

- As of this post, future work will be accompanied with public code and data available at https://github.com/weirddatascience/weirddatascience. As numerous of our routines, particularly for plot generation, are repeated, future code will also rely on our custom R package:

grimoire. We will also be dredging through our dark history to make the code for previous posts public in the near future. - The marginalisation here is given as a sum, rather than a product, due to Stan operating on the log scale for reasons of computational efficiency and numerical stability.

- This second piece of wisdom, in particular, was provided, along with a host of other points of guidance, via the Stan Forums. Particular thanks are due to forum members @bbales and @emiruz.

- We have reproduced the analyses in this post for the 34-topic model with similar results.

1 Trackback / Pingback

-

照亮被照亮的 - 偏执的码农

This site uses User Verification plugin to reduce spam. See how your comment data is processed.